It would be nice if we could rely on software to run forever without failing, but unfortunately, software failures happen, and it’s almost certain that any software you use will suffer from performance issues at one time or another. The best step you can take to prepare and recover is to keep yourself informed about your software’s performance. By monitoring the right metrics, you can position yourself to respond quickly and decisively to mitigate the harm a software failure could cause your business.

How to Use Metrics to Drive Business Success

Making the best use of the software performance metrics you gather requires you to ask questions. Not questions about metrics, but questions about your business that metrics can answer.

The figures that actually matter are conversion rates, customer engagement, revenue generation, and other insights that tangibly quantify your business’s level of success. Use the performance metrics you gather to learn how your software’s performance quality affects your conversion rates, customer engagement levels, and revenue generation. Then, you can act on those lessons by improving software performance where it will have the greatest effect on business success.

Know What You Are Measuring

To select the right metrics to use, you need to know what questions you are trying to answer. Performance metrics should always be used as a way to answer questions about your software’s effect on your business. The numbers themselves are not nearly as important as the actual impact they have on business success. Whether or not the measurements you take actually offer any value depends on how you use them to make decisions about performance improvements.

The following are some of the most important metrics to watch. You should track these metrics continuously and use them to inform gradual performance improvements.

Performance Metrics

You can use metrics for performance analysis to track your software’s stability and to measure the impact that performance failures are having on the business. There are three crucial performance metrics that you should be paying attention to—mean time between failures, mean time to recover, and crash rate. These metrics are closely related to one another and are used to measure a software system’s current performance quality.

Mean time between failures (MTBF)

Mean time between failures is a measurement of the length of time a software system can function before failing and requiring maintenance. It is usually measured in hours.

You need to track three numbers over a given period of time to calculate your software’s MTBF: the total number of hours in the period, the number of hours the software was under repair, and the number of times the software failed. If you subtract the hours under repair from the total hours and divide the result by the number of failures, you will arrive at the software’s MTBF.
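As a rough illustration, the MTBF calculation can be sketched in Python. This assumes operating time is the total hours in the period minus the hours under repair; the function name and the sample figures (a 30-day month with 20 repair hours and 4 failures) are hypothetical.

```python
def mean_time_between_failures(total_hours: float, repair_hours: float, failures: int) -> float:
    """Return MTBF in hours: operating time divided by the number of failures."""
    if failures <= 0:
        raise ValueError("MTBF is undefined when no failures were recorded")
    if repair_hours > total_hours:
        raise ValueError("repair hours cannot exceed total hours in the period")
    operating_hours = total_hours - repair_hours
    return operating_hours / failures


# Hypothetical example: 720 hours in the period, 20 of them under repair, 4 failures.
mtbf = mean_time_between_failures(total_hours=720, repair_hours=20, failures=4)
print(mtbf)  # 175.0
```

With these made-up numbers, the software ran for an average of 175 hours between failures.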

Mean time to recover (MTTR)

Mean time to recover is a measurement of the length of time your software is out of operation, or in other words, the length of time it takes to repair the software in the event of a performance failure. It is also sometimes called mean time to repair or mean time to respond, but the metric is the same.

Measuring MTTR is a little more straightforward than measuring MTBF. To calculate your software’s MTTR, simply calculate the average downtime per failure. Track the total number of hours the software is under maintenance and unavailable to use over a given time period, as well as the number of failures that occur in that time period. Then, divide the number of hours the software was down by the number of incidents.
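The same division can be sketched in a few lines of Python. The function name and the sample figures below are hypothetical and only illustrate the arithmetic.

```python
def mean_time_to_recover(downtime_hours: float, incidents: int) -> float:
    """Return MTTR in hours: total downtime divided by the number of incidents."""
    if incidents <= 0:
        raise ValueError("MTTR is undefined when no incidents were recorded")
    return downtime_hours / incidents


# Hypothetical example: 20 hours of downtime spread across 4 incidents.
mttr = mean_time_to_recover(downtime_hours=20, incidents=4)
print(mttr)  # 5.0
```

In this example, each failure took an average of 5 hours to repair.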

Crash rate

Crash rate is a measurement of how often software fails. Like mean time to recover, your software’s crash rate is represented as a simple average. To calculate it, divide the number of times the software failed over a given period of time by the number of times it was used.
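A minimal Python sketch of the crash rate calculation follows. What counts as a "use" (a session, a request, an app launch) depends on your software, so the definition here is an assumption, and the function name and figures are hypothetical.

```python
def crash_rate(failures: int, uses: int) -> float:
    """Return the fraction of uses that ended in a failure."""
    if uses <= 0:
        raise ValueError("crash rate is undefined when the software was never used")
    return failures / uses


# Hypothetical example: 12 crashes across 4,800 sessions.
rate = crash_rate(failures=12, uses=4800)
print(f"{rate:.2%}")  # 0.25%
```

Expressing the result as a percentage makes it easier to compare across periods with different usage volumes.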

These three metrics can provide a starting point for assessing your software’s performance, but all data requires context. The numbers aren’t useful at face value; they only become useful once you understand what they are telling you about your software and its effect on your business specifically. An MTTR of 24 hours could cause an enormous loss of revenue if a critical system goes down at a very large company, but in other scenarios, an MTTR of 24 hours might be perfectly acceptable.

Other Metrics That Affect Performance

Security Metrics

Security metrics are often forgotten or neglected. After all, a security threat is just the possibility of a future problem, and there are likely other, more immediate problems that are being prioritized. But once a security breach occurs, it’s too late. If you care about security metrics now, you can avoid putting yourself in a tight spot later.

Software security best practices are a vast topic in their own right, but you can easily monitor these two metrics to help you decrease downtime due to security issues:

Endpoint incidents: An endpoint incident is when an endpoint, or a device being used to run your software, experiences a security breach such as a virus infection. Tracking the number of endpoint incidents over a given period of time can tell you whether or not poor security is having a significant effect on your software’s performance quality.

Mean time to recover (again): Mean time to recover is also an important metric in the context of security. Sometimes, a security breach requires the software to be taken offline completely while a solution is being implemented, so the average amount of time your software is spending recovering from threats instead of being used is an important metric to have on your radar. Software cannot perform reliably if it frequently falls victim to security breaches.

Productivity Metrics

Productivity metrics provide a way of measuring how effectively performance issues are being addressed. Whether you are making improvements to your software in-house or working with a third-party developer, a productive performance improvement cycle is essential for keeping maintenance costs low.

Cycle time: Cycle time is a measurement of how long it takes to make an improvement to a software system. This metric is similar to mean time to recover, but the difference is that cycle time measures the time it takes to improve software, while MTTR measures the time it takes to repair software.

Open/close rate: A software application’s open/close rate measures the number of performance issues that are discovered and fixed over a given period of time. This metric is similar to mean time between failures, but it focuses on the total number of failures that occur rather than how often they occur.
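Both productivity metrics can be derived from a simple issue log. The Python sketch below assumes each issue records the date it was opened and, if resolved, the date it was closed, and it treats the time from opened to closed as a stand-in for cycle time. The `Issue` class, the function names, and the sample dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class Issue:
    opened: date
    closed: Optional[date] = None  # None means the issue is still open


def open_close_counts(issues: List[Issue], start: date, end: date) -> Tuple[int, int]:
    """Count issues opened and issues closed within [start, end], inclusive."""
    opened = sum(1 for i in issues if start <= i.opened <= end)
    closed = sum(1 for i in issues if i.closed is not None and start <= i.closed <= end)
    return opened, closed


def average_cycle_days(issues: List[Issue]) -> float:
    """Mean number of days from opened to closed, over closed issues only."""
    durations = [(i.closed - i.opened).days for i in issues if i.closed is not None]
    if not durations:
        raise ValueError("no closed issues to average")
    return sum(durations) / len(durations)


# Hypothetical issue log for one month.
issues = [
    Issue(date(2024, 3, 1), date(2024, 3, 4)),    # closed after 3 days
    Issue(date(2024, 3, 10), date(2024, 3, 17)),  # closed after 7 days
    Issue(date(2024, 3, 20)),                     # still open
]

opened, closed = open_close_counts(issues, date(2024, 3, 1), date(2024, 3, 31))
print(opened, closed)              # 3 2
print(average_cycle_days(issues))  # 5.0
```

If issues are opened faster than they are closed over several periods, the backlog is growing, which is worth weighing alongside the average cycle time.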

Putting the Pieces Together

When you put all of these metrics together, you have an ongoing estimation of your software’s performance risks and the potential impact of performance failures. Now, you can use the data you’ve gathered to answer the question: how do these insights affect the success of the business, and how can I leverage them to improve software performance?

Nearshore Software Development Services

Tracking performance metrics is the best way to monitor your software’s overall health so that you can make continual improvements that will positively affect your business’s productivity and profitability. However, while tracking and interpreting metrics is easy enough, making the improvements is not always so simple.

If you would rather hire a team of experts to optimize your software for you, consider utilizing a nearshore software development company.

KNDCODE is a team of certified professionals who can use their 20+ years of industry experience to help you optimize your software’s performance. KNDCODE can help your business handle just about any software development challenge, from testing, to integrations, to mobile applications, and more.

KNDCODE, always ahead, forward, near.

Ebooks

KNDCODE'S eBooks your gateway to knowledge and expertise in software development. Our curated collection of insightful and practical eBooks covers a wide range of topics, helping you enhance your skills and unlock your full potential. Our free eBooks provide valuable insights, best practices, and real-world solutions to empower your career in the ever-changing world of software development.

Software Development Myths

Here are 8 common software myths and the truth behind each of them.

Read more

KNDCODE Credentials

Solutions to the most complex operational challenges.Learn more about our capabilities.

Read more

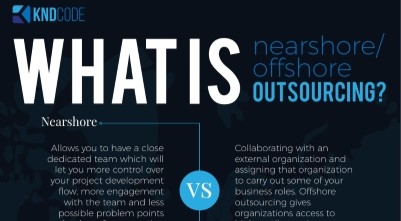

Nearshore vs Offshore SD

Nearshore vs Offshore Software Development. What's the difference?

Read more

Hiring Guide (Dev)

Have access to the complete guide to effective Software Development hiring

Read more